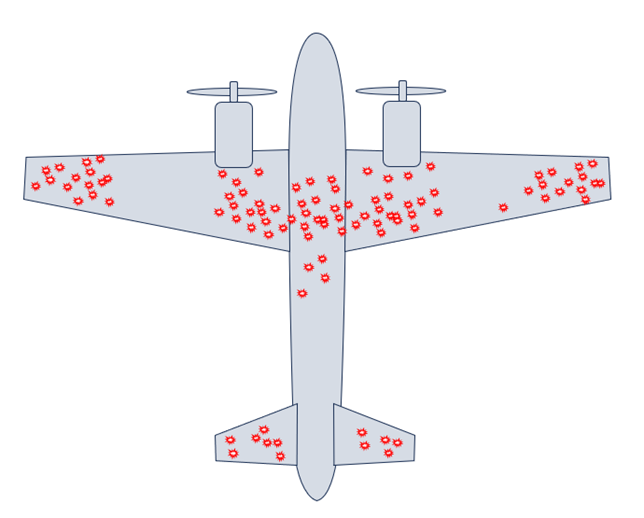

Figure 1: Compilation of bullet hole damage found on planes that returned from battle.

In data analysis, bias can lead to an incorrect interpretation if the analyst holds a strong preconceived notion of the study outcome, or if the complete dataset is not considered. Analysts may not even be aware that they are biasing their interpretation. A careful analyst will always question their study interpretations to make sure that bias has not crept in.

Mathematician’s Insight Led to Critical Breakthrough in World War II

An example of bias can be found in World War II. The Navy wanted to reduce airplane losses during battles. They believed that additional armor would help protect the planes, but they needed to be strategic about where to place it, because heavier planes used more fuel and were less maneuverable. They collected data on the bullet holes accumulated by planes returning from battle in Europe and compiled it into an image like the one shown in Figure 1. The bullet damage was concentrated largely on the wings and fuselage, with little damage to the engines. Based on this analysis, they initially concluded that more armor was needed on the wings and fuselage.

Abraham Wald, a statistician in their group, looked at the data and, after considering the larger picture, reached a different interpretation. He realized that a dataset was missing from the analysis: the planes that did not return from battle. Every plane that contributed to the data in Figure 1 had made it back to base and, hence, had not suffered critical damage.

Wald hypothesized that bullet holes should be distributed more or less randomly over the plane, and that the dataset in Figure 1 was biased because it included only the planes without critical damage. He further reasoned that planes hit in the engines suffered critical damage, could not return to base, and were therefore absent from the dataset. Considering the entire dataset, the conclusion would be to armor the engines, since the wings and fuselage could withstand damage without the plane crashing. Wald therefore looked at where there were no bullet holes to arrive at his conclusion.
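Wald's reasoning can be illustrated with a toy Monte Carlo simulation. The sketch below is only an illustration under stated assumptions: the section names, area fractions, number of hits per plane, and the probability that an engine hit is fatal are all hypothetical values, not historical data. Hits land on each section in proportion to its assumed area, and only engine hits can bring a plane down. Tallying damage on the returning planes alone reproduces the pattern in Figure 1: engines look rarely hit, even though they were hit as often as their area dictates.

```python
import random
from collections import Counter

# Hypothetical fraction of each section's exposed area. Hits are assumed to
# land uniformly over the plane, so these are also the per-hit probabilities.
SECTIONS = {"engine": 0.2, "fuselage": 0.4, "wings": 0.4}

# Hypothetical chance that a single engine hit downs the plane.
# Hits to the fuselage or wings are assumed never to be fatal.
P_ENGINE_FATAL = 0.8


def simulate(n_planes=10_000, hits_per_plane=5, seed=0):
    """Return hit counts by section for all planes and for returning planes only."""
    rng = random.Random(seed)
    names = list(SECTIONS)
    weights = list(SECTIONS.values())
    all_hits = Counter()
    returned_hits = Counter()
    for _ in range(n_planes):
        hits = rng.choices(names, weights=weights, k=hits_per_plane)
        all_hits.update(hits)
        # The plane returns only if none of its engine hits was fatal.
        survived = all(
            rng.random() >= P_ENGINE_FATAL for _ in range(hits.count("engine"))
        )
        if survived:
            # Only survivors show up in the "returned from battle" dataset.
            returned_hits.update(hits)
    return all_hits, returned_hits


def shares(counts):
    """Fraction of recorded hits falling on each section."""
    total = sum(counts.values())
    return {s: counts[s] / total for s in SECTIONS}


if __name__ == "__main__":
    all_hits, returned_hits = simulate()
    print("All planes:     ", {s: round(v, 2) for s, v in shares(all_hits).items()})
    print("Returned planes:", {s: round(v, 2) for s, v in shares(returned_hits).items()})
```

Comparing the two printed distributions shows the engine share of hits dropping sharply among returning planes. The missing engine hits are on the planes that never came back, which is exactly the gap Wald looked for.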

Overcoming Bias in Medical Device Materials Analysis

Cambridge Polymer Group applies this lesson when conducting analysis projects for clients. For instance, in extraction studies for ISO 10993-18, if we do not see potentially toxic compounds in an extract using one detector, we analyze the same extract with another detector to see whether compounds are identified by a different detection mode. This approach is particularly important when reviewing the bill of materials for a medical device to identify which detectors will reliably pick up potential extractables. Just because one detector does not pick up a compound does not mean the compound is not there.

Cambridge Polymer Group is helping to organize a workshop on Best Practices for Precision and Bias for Medical Device Standardization, to be held in Philadelphia in May 2024. The workshop will discuss how to conduct interlaboratory studies on test methods to generate the statistical information needed for a precision and bias (P&B) statement in an ASTM test method, how to use P&B information, and when a P&B study is not appropriate.

A call for abstracts will go out later this year. Please contact Cambridge Polymer Group if you would like to present at or attend the workshop.